Defence & Government Recognition

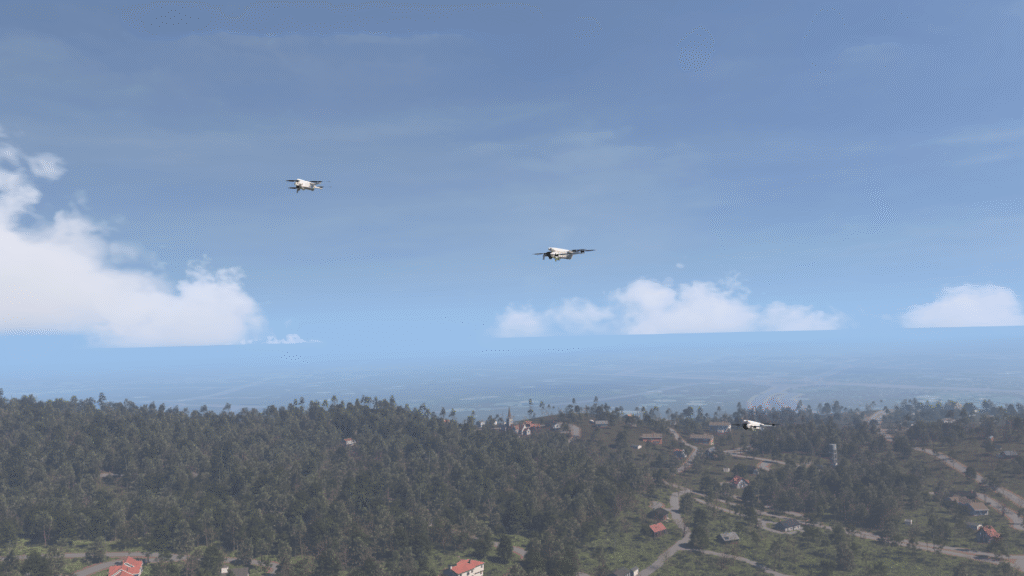

AI Verse is a NATO DIANA (Defence Innovation Accelerator for the North Atlantic) company, selected to develop dual-use synthetic image dataset technology for Allied defence AI applications. DIANA is NATO's flagship initiative connecting leading deep tech startups with Allied defence programmes. President Emmanuel Macron also mentioned AI Verse in his speech at Adopt AI, recognising the company as a national technology champion. The company is backed by Supernova, Innovacomm, and Bpifrance. These credentials reflect AI Verse's standing as a trusted provider of physics-based synthetic RGB and infrared training data for defence, aerospace, and drone manufacturers across Europe.